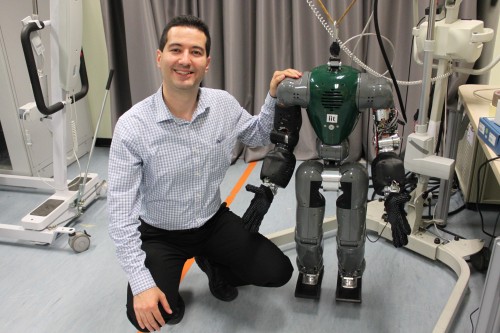

The COMAN robot is a compliant humanoid robot which is currently under development by the Advanced Robotics dept. of the Italian Institute of Technology in Genoa, Italy.

COMAN stands for “COmpliant huMANoid”, because this robot is designed with passive compliance (via springs) in its joints. This allows it to be more robust to environment perturbations (e.g. walking on uneven ground), to be safer for human-robot interaction (soft to touch), to be more energy-efficient, and to perform more dynamic motions (e.g. jumping, running).

COMAN can also be interpreted as Co-Man, meaning a co-worker, a robot which is a partner to humans, designed for safe physical human-robot interaction. The robot’s design is derived from the compliant joint design of the cCub bipedal robot.

This is a close-up of the passively-compliant legs of the robot:

Below is a video of the COMAN walking experiment I did together with Barkan Ugurlu and Nikos Tsagarakis. The goal was to learn to minimize the energy that COMAN consumes while walking. This video accompanies my IROS 2011 paper, presented in San Francisco in September 2011.

We present a learning-based approach for minimizing the electric energy consumption during walking of a passively-compliant bipedal robot. The energy consumption is reduced by learning a varying-height center-of-mass trajectory which efficiently uses the robot’s passive compliance. To do this, we propose a reinforcement learning method which evolves the policy parameterization dynamically during the learning process and thus manages to find better policies faster than with a fixed parameterization. The method is first tested on a function approximation task, and then applied to the humanoid robot COMAN, where it achieves significant energy reduction.
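To give a rough feel for what "evolving the policy parameterization" can mean, here is a minimal sketch on a toy function approximation task. It is not the algorithm from the paper: the piecewise-linear curve, the simple random-perturbation hill climbing used as the optimizer, and all names (`evaluate`, `refine`, `cost`, `learn`) and default values are assumptions chosen for illustration. The only idea it tries to show is that learning starts with a few parameters and the resolution is increased during learning without changing the curve that has already been learned.

```python
import math
import random

def evaluate(knots, x):
    """Piecewise-linear curve through knots spaced uniformly on [0, 1]."""
    pos = x * (len(knots) - 1)
    i = min(int(pos), len(knots) - 2)
    frac = pos - i
    return (1.0 - frac) * knots[i] + frac * knots[i + 1]

def refine(knots):
    """Go from n to 2n-1 knots by inserting midpoints; the curve is unchanged."""
    fine = []
    for a, b in zip(knots, knots[1:]):
        fine += [a, 0.5 * (a + b)]
    return fine + [knots[-1]]

def cost(knots, target_fn, n_samples=100):
    """Mean squared error between the curve and the target on a uniform grid."""
    xs = [k / (n_samples - 1) for k in range(n_samples)]
    return sum((evaluate(knots, x) - target_fn(x)) ** 2 for x in xs) / n_samples

def learn(target_fn, n_knots=3, n_stages=4, iters_per_stage=300, sigma=0.1, seed=0):
    """Hill climbing (a stand-in optimizer) with a parameterization that grows per stage."""
    rng = random.Random(seed)
    knots = [0.0] * n_knots
    best = cost(knots, target_fn)
    history = [best]
    for stage in range(n_stages):
        for _ in range(iters_per_stage):
            # Perturb all parameters and keep the candidate only if it is better.
            candidate = [k + rng.gauss(0.0, sigma) for k in knots]
            c = cost(candidate, target_fn)
            if c < best:
                knots, best = candidate, c
            history.append(best)
        if stage < n_stages - 1:
            # Evolve the parameterization: more knots, same curve, same cost.
            knots = refine(knots)
    return knots, history
```

Calling `learn(lambda x: math.sin(2 * math.pi * x))` returns the final knot values and the best cost after each iteration. Because `refine` preserves the curve, nothing learned in a coarse stage is lost when the number of parameters grows.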

Link to publication:

Kormushev, P., Ugurlu, B., Calinon, S., Tsagarakis, N., and Caldwell, D.G., “Bipedal Walking Energy Minimization by Reinforcement Learning with Evolving Policy Parameterization”, IEEE/RSJ Intl Conf. on Intelligent Robots and Systems (IROS-2011), San Francisco, 2011. [pdf] [bibtex]

This is an older prototype of COMAN, in July 2012: